Stake: don’t show RAG answers to your CFO or clinicians without sources and measurable confidence.

RAG is useful until it invents numbers your finance team will act on. Our position: every RAG-powered output that affects money, compliance, or patient care needs explicit citations, a confidence gate, and monitoring that measures hallucination rate against an auditable threshold (we recommend <0.5% for finance/clinical outputs).

Why RAG trips enterprise stakeholders

RAG fails when retrieval is noisy or the prompt mixes passages from unrelated contexts. For a CFO, a fabricated revenue figure is both embarrassing and costly; for a clinician, a wrong dosage is dangerous. Three common failure modes:

- Bad retrieval: irrelevant documents or stale data returned because of poor metadata filtering or embedding drift.

- Overconfident LLM: the model fills gaps with plausible-sounding but false facts.

- Missing provenance: no link back to the source document, so humans can’t verify claims.

Operational constraints for enterprise RAG:

- Auditable provenance: every claim must link to a doc ID and text span.

- Numeric thresholds: set a maximum allowable hallucination rate (we use 0.5% for finance/clinical pipelines; for lower-risk support bots 1–2% may be acceptable).

- Latency & cost budgets: balance retrieval depth against token costs (e.g., rerank the top 50 candidates and pass only the top 5–10 passages to the LLM).

Tools we pick: Pinecone (managed scale), Milvus (self-host), OpenAI/Cohere embeddings, LangChain orchestration, plus monitoring with Arize/MLflow.

Choosing the right vector store and embeddings

Pick the vector store for the scale and operational constraints you own.

| Need | Managed | Self-host / On-prem | Notes |
|---|---|---|---|
| Production, minimal ops, multi-region | Pinecone | - | Pinecone handles 100M+ vectors, automatic scaling, and metadata filters. Good choice for enterprise SaaS teams. |
| On-prem or data-residency | - | Milvus | Milvus supports large-scale self-host, GPU acceleration, and tight compliance. Requires ops expertise. |
| Hybrid with schema & knowledge graphs | Weaviate | Weaviate | Built-in vector + graph search; useful when semantic relationships matter. |

Embeddings: OpenAI embeddings are the pragmatic default for English business text (good performance, managed), Cohere is a close second with competitive pricing, and SentenceTransformers (self-hosted) suits private deployments. Practical rules:

- Use the same embedding model across ingestion and query. Vectors from different models live in different spaces and are not comparable, so mixing providers degrades retrieval.

- Re-embed on schema changes, and otherwise at the quarterly check whenever more than 10% of the corpus has changed.

- Store both vector and sparse metadata (doc_id, source_uri, timestamp, section).
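
The rules above can be sketched as a record shape plus two small checks. This is a minimal illustration under assumptions, not a prescribed schema: `ChunkRecord`, `needs_reembed`, and `check_same_model` are hypothetical names, and the only numbers used are the 10% threshold and the metadata fields listed above.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class ChunkRecord:
    """One stored chunk: the vector plus the metadata used for filtering and citation."""
    doc_id: str
    source_uri: str
    timestamp: datetime
    section: str
    embedding_model: str  # hypothetical extra field: lets us detect mixed models
    vector: tuple[float, ...]


def needs_reembed(changed_docs: int, total_docs: int, schema_changed: bool,
                  threshold: float = 0.10) -> bool:
    """True on a schema change, or when more than `threshold` of the corpus changed."""
    if schema_changed:
        return True
    if total_docs == 0:
        return False
    return changed_docs / total_docs > threshold


def check_same_model(record: ChunkRecord, query_model: str) -> None:
    """Refuse to compare vectors that came from different embedding models."""
    if record.embedding_model != query_model:
        raise ValueError(
            f"query model {query_model!r} != ingestion model {record.embedding_model!r}"
        )
```

Storing the model name next to each vector is a design choice of this sketch; it makes the "same model at ingestion and query" rule checkable at query time rather than a convention.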

Orchestration patterns that reduce hallucination 🔁

Two patterns have worked in production: hybrid-search + rerank, and citation-first retrieval.

Hybrid-search + rerank (our default):

- Step 1: Sparse filter (SQL/Elasticsearch) by metadata to limit search scope (e.g., fiscal-year, patient-id).

- Step 2: BM25 for keyword recall, return top 200 document IDs.

- Step 3: Dense vector search over the filtered set, return top_k 50.

- Step 4: Cross-encoder or semantic reranker (a small transformer is enough) to pick the top 5–10 passages.

- Step 5: Pass those passages with explicit citations to the LLM. Block if no passage passes a relevance threshold.
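
The five steps above can be sketched end to end in plain Python. This is an illustration under assumptions, not our production code: the corpus layout, `hybrid_retrieve`, `overlap_reranker`, and the `min_relevance` value of 0.5 are hypothetical, the sketch treats BM25 as a prefilter for the dense search, and the token-overlap reranker is only a stand-in for a real cross-encoder.

```python
import math
from collections import Counter
from typing import Callable, Optional


def tokenize(text: str) -> list[str]:
    return text.lower().split()


def bm25_scores(query: str, docs: dict[str, str],
                k1: float = 1.5, b: float = 0.75) -> dict[str, float]:
    """Plain BM25 over whitespace tokens; enough to illustrate keyword recall."""
    tokens = {doc_id: tokenize(text) for doc_id, text in docs.items()}
    n = len(tokens)
    avg_len = sum(len(t) for t in tokens.values()) / n if n else 0.0
    df = Counter(term for toks in tokens.values() for term in set(toks))
    scores = {}
    for doc_id, toks in tokens.items():
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (n - df[term] + 0.5) / (df[term] + 0.5))
            norm = tf[term] + k1 * (1 - b + b * len(toks) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores[doc_id] = score
    return scores


def cosine(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def overlap_reranker(query: str, passage: str) -> float:
    """Stand-in for a cross-encoder: fraction of query tokens found in the passage."""
    q = set(tokenize(query))
    return len(q & set(tokenize(passage))) / len(q) if q else 0.0


def hybrid_retrieve(query: str, query_vec: tuple[float, ...], corpus: dict[str, dict],
                    metadata_filter: Callable[[dict], bool],
                    rerank: Callable[[str, str], float] = overlap_reranker,
                    bm25_top: int = 200, dense_top: int = 50, final_top: int = 5,
                    min_relevance: float = 0.5) -> Optional[list[tuple[str, float]]]:
    # Step 1: metadata filter limits the search scope.
    scoped = {d: c for d, c in corpus.items() if metadata_filter(c["meta"])}
    # Step 2: BM25 keeps the top keyword matches.
    kw = bm25_scores(query, {d: c["text"] for d, c in scoped.items()})
    keep = sorted(kw, key=kw.get, reverse=True)[:bm25_top]
    # Step 3: dense search over the survivors.
    dense = sorted(keep, key=lambda d: cosine(query_vec, scoped[d]["vector"]),
                   reverse=True)[:dense_top]
    # Step 4: rerank and keep the best few passages.
    reranked = sorted(((rerank(query, scoped[d]["text"]), d) for d in dense),
                      reverse=True)[:final_top]
    # Step 5: block (return None) if nothing clears the relevance threshold.
    passages = [(d, s) for s, d in reranked if s >= min_relevance]
    return passages or None


# Made-up three-document corpus, for illustration only.
corpus = {
    "a": {"text": "revenue for fiscal year 2023 was reported in the annual filing",
          "meta": {"fiscal_year": 2023}, "vector": (1.0, 0.0)},
    "b": {"text": "employee handbook vacation policy",
          "meta": {"fiscal_year": 2023}, "vector": (0.0, 1.0)},
    "c": {"text": "revenue for fiscal year 2021",
          "meta": {"fiscal_year": 2021}, "vector": (1.0, 0.0)},
}
in_fy2023 = lambda meta: meta["fiscal_year"] == 2023
hits = hybrid_retrieve("revenue fiscal year 2023", (1.0, 0.0), corpus, in_fy2023)
```

Returning `None` instead of an empty list makes the "block" case explicit, so the caller has to handle it rather than pass an empty context to the LLM.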

Citation-first retrieval (for finance/clinicians): always surface the top 3 citations, with verbatim snippets and doc links. The LLM can only quote from the provided snippets; if the answer requires synthesis across docs, require human review.
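
A hedged sketch of how the quote-only rule could be enforced after generation. It assumes the LLM returns its quotes as a list of strings; `locate_quotes` and `needs_human_review` are hypothetical helpers, "synthesis across docs" is approximated as "quotes come from more than one document", and the snippets are made-up sample data.

```python
import re
from typing import Optional


def normalize(text: str) -> str:
    """Collapse whitespace and case so layout differences do not break matching."""
    return re.sub(r"\s+", " ", text).strip().lower()


def locate_quotes(quotes: list[str], snippets: dict[str, str]) -> dict[str, Optional[str]]:
    """Map each quote to the doc_id whose snippet contains it, or None if unsupported."""
    located: dict[str, Optional[str]] = {}
    for quote in quotes:
        nq = normalize(quote)
        located[quote] = None
        if not nq:
            continue  # an empty quote supports nothing
        for doc_id, text in snippets.items():
            if nq in normalize(text):
                located[quote] = doc_id
                break
    return located


def needs_human_review(located: dict[str, Optional[str]]) -> bool:
    """Review if any quote is unsupported or the answer draws on several documents."""
    if any(doc_id is None for doc_id in located.values()):
        return True
    return len(set(located.values())) > 1


# Made-up snippets, for illustration only.
snippets = {
    "10-K-2023": "Total revenue was $4.2 million in fiscal 2023.",
    "memo-7": "Headcount grew by 12 people.",
}
ok = locate_quotes(["total revenue was $4.2 million"], snippets)
```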

Simple ASCII architecture that we use in production:

    [Ingest: PDFs / DB / CRM] -> [Extract: OCR/NER] -> [Chunk & embed: OpenAI/Cohere] -> [Vector DB: Pinecone / Milvus]
        |
        v
    [Query] -> [Metadata filter] -> [BM25 + Dense Search] -> [Reranker (cross-encoder)] -> [Top-5 passages + citations]
        |> [Grounding / Citation block]
        v
    [LLM prompt (system + citations)] -> [Answer + provenance]
        |
        v
    [Confidence gate -> UI or Human-in-loop]

We use LangChain to orchestrate these steps (Retriever chaining + custom reranker hook). For model calls, we prefer OpenAI or Anthropic depending on safety profiles.

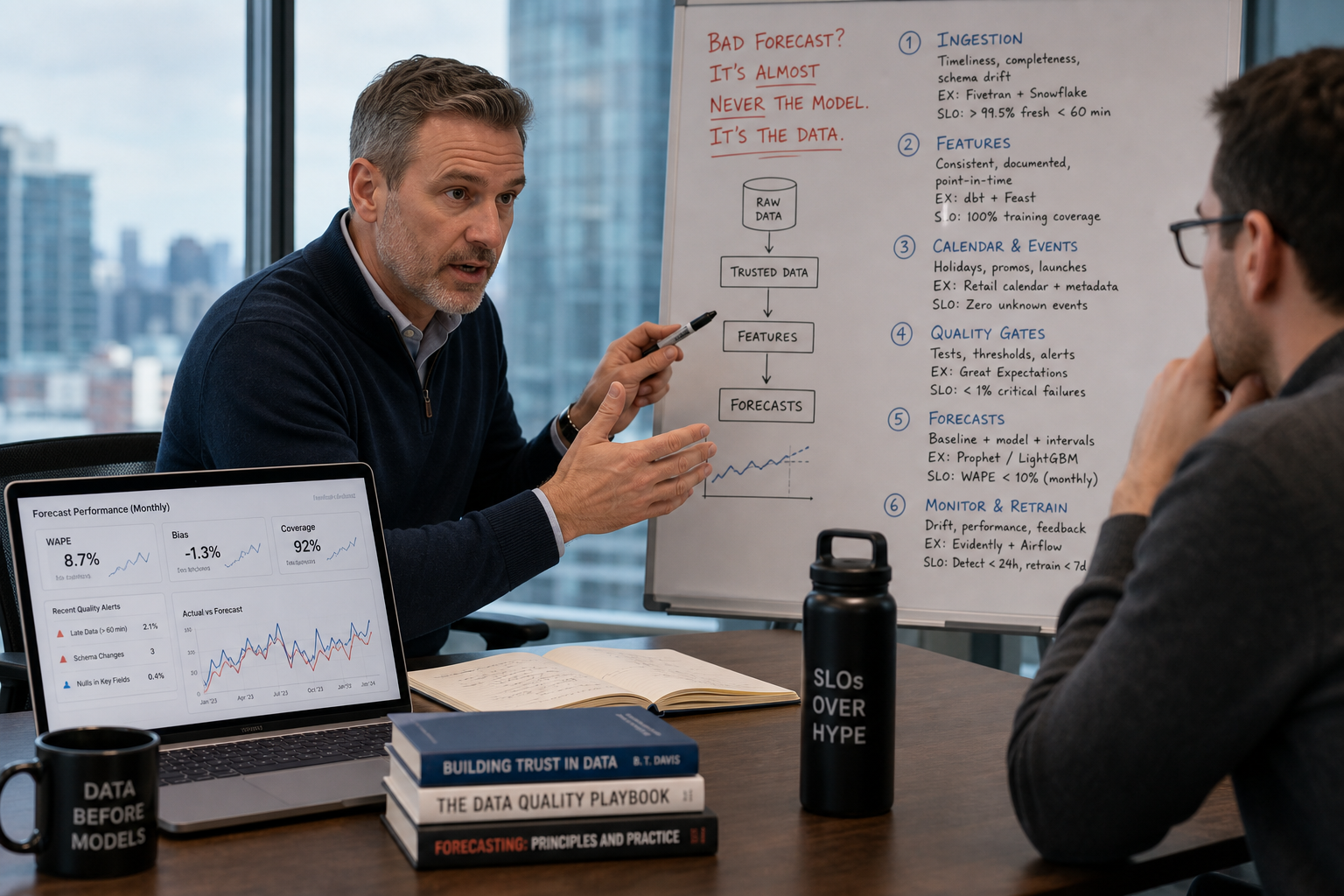

Evaluation metrics and monitoring

You can’t operate RAG without metrics. Measure at retrieval and at output.

Retrieval metrics (automated):

- Retrieval recall@k (how often the ground-truth doc is in top_k). Target: >95% at k=50 for high-risk flows.

- Passage-level precision (relevant passages / returned passages). Target: >90%.
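
Both retrieval metrics are short to compute once you have labeled queries. A minimal sketch, assuming one ground-truth doc ID per query and a set of passage IDs judged relevant by a labeler; the function names are illustrative.

```python
def recall_at_k(ranked_results: list[list[str]], ground_truth: list[str], k: int) -> float:
    """Share of queries whose ground-truth doc appears in the top k results."""
    if not ground_truth:
        return 0.0
    hits = sum(1 for ranked, truth in zip(ranked_results, ground_truth) if truth in ranked[:k])
    return hits / len(ground_truth)


def passage_precision(returned: list[str], relevant: set[str]) -> float:
    """Relevant passages divided by returned passages."""
    if not returned:
        return 0.0
    return sum(1 for passage_id in returned if passage_id in relevant) / len(returned)
```

For the high-risk target above you would call `recall_at_k(results, truth, k=50)` on a held-out query set and alert when it drops to 0.95 or below.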

Output metrics (human+automated):

- Hallucination rate = hallucinated answers / sampled answers. Goal: <0.5% for finance/clinical, measured on weekly 1,000-sample audits to start.

- Citation match rate = percent of claims that link to an explicit source snippet. Target: ≥99% for finance/clinical.

- Factual precision (human-labeled). Aim: >99% on high-risk claims.
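
When reading a 1,000-sample audit, it can help to look at an upper confidence bound as well as the point estimate, because a handful of errors in 1,000 samples leaves real uncertainty. The sketch below uses the standard Wilson score interval; applying it here, the 95% level (z = 1.96), and the function names are our assumptions, not part of the audit process described above.

```python
import math


def hallucination_rate(hallucinated: int, sampled: int) -> float:
    """Point estimate: hallucinated answers / sampled answers."""
    if sampled <= 0:
        raise ValueError("sampled must be positive")
    return hallucinated / sampled


def wilson_upper_bound(hallucinated: int, sampled: int, z: float = 1.96) -> float:
    """Upper end of the Wilson score interval for the true hallucination rate."""
    p = hallucination_rate(hallucinated, sampled)
    denom = 1 + z * z / sampled
    center = (p + z * z / (2 * sampled)) / denom
    margin = z * math.sqrt(p * (1 - p) / sampled + z * z / (4 * sampled * sampled)) / denom
    return center + margin


rate = hallucination_rate(4, 1000)     # 4 flagged answers in a 1,000-sample audit
upper = wilson_upper_bound(4, 1000)    # roughly 1%, well above the point estimate
```

Four flagged answers in 1,000 gives a point estimate under 0.5%, but the upper bound sits near 1%. Pooling several weekly audits narrows the interval.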

Monitoring & tooling:

- Data quality gates with Great Expectations before embedding.

- Model and prediction drift tracked with Arize and custom MLflow experiments.

- Deployment using Seldon or SageMaker for canarying model versions and rolling back on metric regressions.

When a metric breaks (e.g., hallucination rate > threshold), protections kick in automatically: downgrade to a conservative model, route outputs to human review, and flag ingestion issues.
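
A minimal sketch of that check, using the targets listed in this section. The metric keys, `TARGETS`, and the action names are hypothetical labels; wiring them to Arize, MLflow, or a deployment tool is left out.

```python
import operator

# Each target is (comparison the metric must satisfy, threshold), taken from the lists above.
TARGETS = {
    "recall_at_50": (operator.gt, 0.95),
    "passage_precision": (operator.gt, 0.90),
    "hallucination_rate": (operator.lt, 0.005),
    "citation_match_rate": (operator.ge, 0.99),
    "factual_precision": (operator.gt, 0.99),
}

PROTECTIONS = (
    "downgrade_to_conservative_model",
    "route_outputs_to_human_review",
    "flag_ingestion_issues",
)


def breached(metrics: dict[str, float]) -> list[str]:
    """Names of reported metrics that miss their target, sorted for stable alerts."""
    return sorted(
        name for name, (satisfies, target) in TARGETS.items()
        if name in metrics and not satisfies(metrics[name], target)
    )


def protections_for(metrics: dict[str, float]) -> tuple[str, ...]:
    """All protections apply as soon as any metric is out of bounds."""
    return PROTECTIONS if breached(metrics) else ()
```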

Citation strategies & UI patterns that protect finance and clinicians

For CFOs and clinicians the UI is the guardrail.

- Show the claim, then list 1–3 source snippets with doc_id, page, and sentence span.

- Add a confidence badge (High / Medium / Low) based on retrieval score + model logits.

- Provide a single-click “verify” that opens the source doc at the exact paragraph.

- For any numeric claim, require a structured provenance object: { claim, source_doc_id, source_span, retrieval_score }.
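
The provenance object and the badge could look like the sketch below. The field names follow the object above; representing `source_span` as a (start, end) character offset pair, taking a single combined confidence score in [0, 1] as input, and the 0.85 / 0.6 badge cutoffs are all assumptions to tune per deployment. The sample values are made up.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Provenance:
    claim: str
    source_doc_id: str
    source_span: tuple[int, int]  # assumed: (start, end) character offsets in the source
    retrieval_score: float


def confidence_badge(confidence: float, high: float = 0.85, medium: float = 0.6) -> str:
    """Map a combined confidence score in [0, 1] to the badge shown in the UI."""
    if confidence >= high:
        return "High"
    if confidence >= medium:
        return "Medium"
    return "Low"


# Made-up example values, for illustration only.
example = Provenance(
    claim="Q3 revenue was $4.2M",
    source_doc_id="10-Q-2023-Q3",
    source_span=(1520, 1571),
    retrieval_score=0.91,
)
payload = asdict(example)  # plain dict, ready to serialize alongside the answer
```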

Policy example: any answer with a confidence < 0.85 or missing a citation must be routed to a human reviewer before downstream systems consume it (ERP entry, clinical order, etc.).
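
That policy is small enough to express as a single gate in front of downstream consumers. A minimal sketch; `route` and the destination labels are hypothetical, and the 0.85 threshold is the one from the policy above.

```python
def route(confidence: float, citations: list[str], threshold: float = 0.85) -> str:
    """Send low-confidence or uncited answers to a human before anything consumes them."""
    if confidence < threshold or not citations:
        return "human_review"
    return "downstream"
```

For example, `route(0.92, [])` goes to human review despite the high confidence, because the citation is missing.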

Live example: support RAG that cut tickets 75% and kept hallucinations <0.5%

Client: mid-market SaaS support center. Problem: slow KB search, high ticket volume, incorrect self-help responses.

What we built at Niche.dev:

- Ingested docs: KB articles, release notes, and transcripts into Pinecone with OpenAI embeddings.

- Retrieval: LangChain orchestration with BM25 prefilter, Pinecone dense search, and a cross-encoder reranker.

- Answering: LLM prompted only with top-5 passages; UI showed citations and a confidence bar.

- Monitoring: Weekly human audits on 1,000 answers tracked in MLflow; Arize tracked drift.

Outcome: 75% of support tickets deflected and a live hallucination rate of 0.45% (below our 0.5% threshold). Measured results: support team time reduced by 2.8 FTE-equivalents, faster MTTR, and higher CSAT. This is a Niche.dev engagement outcome tied to a voice and document intelligence implementation.

Implementation checklist (quick)

- [ ] Define hallucination budget and SLAs (0.5% for finance/clinical).

- [ ] Choose vector store: Pinecone (managed) or Milvus (self-host).

- [ ] Standardize on one embedding provider (OpenAI or Cohere).

- [ ] Implement hybrid search + reranker via LangChain.

- [ ] Add citation-first UI and required confidence gates.

- [ ] Put monitoring in place: Great Expectations, MLflow, Arize, Seldon.

- [ ] Run weekly human audits on 1,000-sample slices for 12 weeks, then monthly.

Conclusion & CTA

Need help with RAG for the enterprise? Book a free strategy call with Niche.dev.